The More Nonsense, the More Credible

Brandolini's law doesn't just make lies hard to refute. It turns incoherence into a competitive advantage.

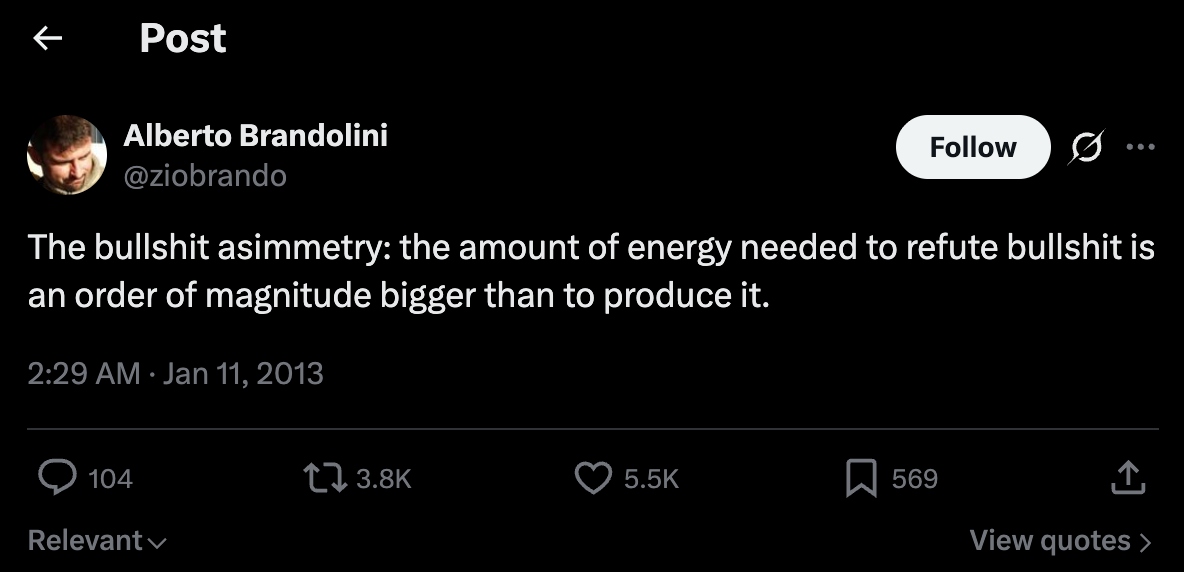

A colleague of mine recently put a name to a phenomenon that has been front and center in our work and in society at large. Brandolini’s law states that:

“The amount of energy needed to refute bullshit is an order of magnitude bigger than that needed to produce it.”

In other words, refutation costs more than fabrication. That asymmetry shapes everything that follows. Consider flat earthers and the traction they achieve with baseless claims, even against centuries of scientific evidence. In public health, consider the pervasive anti-vaccine movement, decades after vaccines eradicated smallpox and brought other deadly diseases under control.

In the political realm, this produces an odd pattern. Some of the most nonsensical policies and decisions get attributed to 3D chess. The simpler explanations, ignorance or malice, go unexamined.

I want to establish that these two things, the improper attribution of 3D chess genius and Brandolini’s law, are related. Understanding their relationship gives us a blueprint for dealing with habitual liars and scammers.

The mechanism behind the confusion

Brandolini’s law creates 3D chess attributions. To see how, start with what happens when audiences encounter incoherence from someone they want to keep believing.

Prolific fabricators create ever-expanding refutation burdens. Their audiences face an uncomfortable choice. They can do the expensive work to verify claims themselves, risking the discovery that they have been trusting someone untrustworthy. Or they can find an alternative explanation for their confusion that protects their existing beliefs and their self-respect.

This is where Brandolini’s law does its damage. Verification costs real time and cognitive effort. Fabrication costs almost nothing. A prolific source produces claims faster than any individual can reasonably check them. Verification stops being a neutral option. It becomes a burden the audience has to decide whether to carry.
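To make the asymmetry concrete, here is a minimal sketch in Python. The numbers are illustrative assumptions, not measurements: a source who needs one minute per claim, a checker who needs ten minutes to refute each one (the "order of magnitude" in Brandolini’s law), and an hour a day available to each. The function name `unchecked_backlog` is hypothetical.

```python
def unchecked_backlog(days, minutes_per_day=60, produce_cost=1, refute_cost=10):
    """Return how many claims are still unchecked after `days`.

    Illustrative assumptions: the source and the checker each spend
    `minutes_per_day` on the task; making a claim costs `produce_cost`
    minutes and refuting one costs `refute_cost` minutes.
    """
    produced_per_day = minutes_per_day // produce_cost
    refuted_per_day = minutes_per_day // refute_cost
    backlog = 0
    for _ in range(days):
        backlog += produced_per_day               # new claims arrive
        backlog -= min(backlog, refuted_per_day)  # checker clears what they can
    return backlog


if __name__ == "__main__":
    for days in (1, 7, 30):
        print(days, unchecked_backlog(days))
```

Under these assumed numbers the pile of unchecked claims grows by 54 every day. The checker only keeps pace if refuting a claim costs about the same as making one, which is exactly what Brandolini’s law says does not happen.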

Most will not carry it. But they still need to resolve the confusion somehow.

This is not simple intellectual deference. It is something more specific. When audiences encounter incoherence from trusted sources, they face competing explanations for their confusion, and they reach for the one that costs them least.

Traditional authorities present complex information. Understanding it takes effort. When people distrust those authorities, complexity stops being a credential. It becomes a liability. The thinking becomes: “This is unnecessarily complicated because they’re trying to deceive me.”

But when trusted contrarian sources make claims that don’t hold up under scrutiny, believers face a different problem. The claims themselves are simple and appealing. The contradictory evidence comes from the same complex institutional sources they have already rejected. So believers must reconcile two uncomfortable facts: their trusted source’s claims don’t make logical sense, yet the rebuttals come from sources they have decided are untrustworthy.

The resolution splits in two. Institutional complexity gets framed as deliberate obfuscation: “They’re making it complicated to hide the truth.” Contrarian incoherence gets framed as strategic sophistication: “He’s playing chess while they’re playing checkers.” This lets believers maintain that they are smart enough to see through institutional deception while crediting their preferred source with genius-level strategic thinking that explains away obvious logical gaps.

The move isn’t “I don’t understand this, so it must be sophisticated.” It’s “I don’t trust the complex explanations from authorities, but I do trust this simple source, so when his claims seem contradictory, he must be operating on a level I can’t quite see.”

Look at what this accomplishes. Faced with a contradictory statement from a figure they trust, supporters have three options: acknowledge the contradiction and lose faith in their own judgment, invest significant effort to verify and possibly confirm the contradiction, or assume the apparent contradiction reflects strategic thinking beyond their comprehension.

The third option costs the least and preserves the most. It transforms confusion from a warning signal into confirmation of sophistication. The more incoherent the statement, the more sophisticated the attributed thinking required to explain it.

The flat earth movement demonstrates this process perfectly. It starts with distrust of scientific disciplines people don’t understand: astronomy and physics. Contrarian sources gain credibility through simplified anti-establishment messaging rather than science-based presentation. When these sources make baseless claims, believers get confused because the reasoning offered is nonsensical by design. There is no logical substance to explore, much less understand. But believers want to believe, so they explain away their confusion through the dual mechanism described above. Contradictory evidence gets dismissed as institutional conspiracy. The incoherent reasoning gets attributed to sophisticated truth-telling they are not smart enough to fully grasp. The contrarian source gets credited with playing chess while institutions play checkers.

The feedback loop

This creates a perverse incentive structure. Incoherence is not a bug. In this system, it is the product. Sources who produce clear, verifiable claims face constant fact-checking and pushback. Sources who produce simplistic nonsense get credited with playing 3D chess.

Once the 3D chess attribution takes hold, it becomes self-reinforcing. Every subsequent confusing statement adds to the perceived evidence of advanced strategic thinking. Believers develop a stake in maintaining the attribution, because abandoning it means acknowledging they were wrong not just about the most recent claim, but about their entire assessment of the source’s competence.

The result is a feedback loop where the most prolific creators of falsehoods receive the most intellectual credit. The more nonsense one produces, the more credible one becomes, as long as the premise stays simple enough for people to take at face value. The system rewards quantity over accuracy and confusion over comprehension.

This explains why obviously incoherent figures maintain devoted followings despite producing provably false claims. Their audiences are not consuming the claims as information to be verified. They are consuming the confusion as evidence of sophistication they cannot quite access.

None of this means supporters are unreachable. It does mean the standard approach (more evidence, more complexity, more institutional authority) is doing the opposite of what we think it’s doing. Every additional layer of complexity increases the refutation burden and strengthens the case for trust-preserving attribution. If we want to reach these audiences, we have to work against the asymmetry of Brandolini’s law, not feed it.

A blueprint for response

Understanding this mechanism suggests several practical responses for dealing with habitual liars and, more importantly, their supporters.

First, recognize that simplicity is key. The people who consume fake science and other dubious claims are not interested in the complex arguments we might be tempted to press on them to bring them to their senses, nor do they have the time or energy to absorb them. Make your counter-arguments simple and make them clear. That also reduces the refutation burden.

Second, certainty is paramount. Science rightly prides itself on its ability to question its findings and constantly challenge itself, but the reasons why that matters are lost on this crowd. Any equivocation is seen as weakness, and is an implicit invitation for some charlatan to come along with a simpler, more certain explanation made up out of whole cloth. This does not mean inventing things we don’t believe or cannot demonstrate. We can have confidence in not knowing the answer to a question. What came before the big bang? Science does not know. What came after the big bang? Science does know, and we should be able to explain it simply and confidently.

Finally, and most importantly, we must come down from our high horses. The people who fabricate these lies are despicable. The people who fall prey to them are a different group. They are often people who have been lied to by their government, their politicians, and the companies that manufacture the products they consume. All those liars have historically been adept at justifying their lies with complicated-sounding explanations. So when someone comes along willing to explain things simply, plainly, and with confidence, some people are just relieved to finally be spoken to without condescension.

I will not use the term “dumbing down” to describe the blueprint here. These people are not dumb. They are not stupid. They are tired, they are busy, and mostly they are weary of intellectualism.

Lead with simplicity and empathy. But remember that the burden of proof still rests with the people making up the new theory. With that in mind, we might challenge a flat earther with this experiment. The next time the sun comes up in your time zone, call or text someone in another time zone, and ask them how long the sun has been up for them. If the earth were flat, the sun would illuminate the entire surface at the same time. A lamp hovering over a flat sheet of paper demonstrates that. When the flat earther’s friend in a different time zone reports a different sunrise time, they will have little choice but to recognize that their theory has a hole that has nothing to do with complicated physics or mathematics.
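For anyone who wants to predict what the friend will report, the arithmetic is short. The sketch below is a rough approximation under stated assumptions: both people live at about the same latitude, so only the Earth’s rotation (360 degrees in 24 hours, or 4 minutes per degree of longitude) matters. The function name and the example longitudes are hypothetical.

```python
def sunrise_offset_minutes(longitude_a, longitude_b):
    """Approximate how many minutes earlier the sun rises at A than at B.

    Assumes both places are at roughly the same latitude and that the
    longitudes (degrees, east positive) do not straddle the date line.
    A positive result means A, the more easterly place, sees sunrise first.
    """
    minutes_per_degree = 24 * 60 / 360  # the Earth turns 1 degree every 4 minutes
    return (longitude_a - longitude_b) * minutes_per_degree


if __name__ == "__main__":
    # Two hypothetical places 45 degrees of longitude apart.
    print(sunrise_offset_minutes(-75, -120))  # 180.0, i.e. about three hours
```

The point of the experiment stands without the calculation. The phone call is enough. The number just tells you roughly how large a gap to expect.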

Not all theories are as easily disprovable as the flat earth, of course. But this blueprint can work. And even if it doesn’t fully convince people to change their view, it might open an important line of communication with people who may appear to have abandoned logical thinking, but have really just fallen prey to habitual liars and exploiters of Brandolini’s law.
